Something strange is happening to your marketing data. You’re spending the same amount on ads, maybe even more, but your dashboards are telling conflicting stories. Google Analytics 4 says you had 50 conversions last month. Facebook Ads Manager claims 75. Your actual sales? Somewhere in between, but you’re not sure which number to trust anymore.

This isn’t a glitch in your setup. It’s not a problem with your pixel installation. What you’re experiencing is called “signal loss”, and it’s quietly eroding the foundation of digital marketing for businesses like yours.

What’s happening

Browsers, privacy laws, and users rejecting consent banners are systematically stripping the data your ad platforms need to attribute conversions. Somewhere between 30 and 50% of your actual conversions are never reported back to the platforms spending your money.

Why it matters

When ad platforms do not receive data on which clicks led to sales, they lose the ability to find the people most likely to buy. Your costs go up because the targeting gets worse. On top of that, conversions that do happen often go unrecorded in your dashboards, leaving you making budget decisions based on incomplete numbers. You are essentially guessing what is working and what is not.

What to do about it

Server-side tracking, Consent Mode v2, and Enhanced Conversions recover lost signal at the infrastructure level. Shifting your primary KPI from platform-reported ROAS to Marketing Efficiency Ratio (total revenue divided by total marketing spend) gives you a number that does not depend on attribution at all. The rest of this post explains each of these in detail.

The Disconnect Between What You Spend and What You See

Let’s rewind to how digital advertising used to work. Between 2010 and 2020, the system was straightforward and reliable. Someone clicked your ad, a third-party cookie landed on their device, and when they bought something within 30 days, a pixel fired to confirm it happened. The connection between your ad spend and your revenue was crystal clear. You could calculate your Return on Ad Spend (ROAS) down to the penny.

That era is over.

Today, business owners are reporting what feels like a “black box” problem. Your ad platforms show one set of numbers. GA4 shows completely different numbers, sometimes 50% lower. Meanwhile, you’re looking at your bank account wondering which dashboard is telling you the truth.

This creates a specific type of anxiety. You’ve lost your “source of truth.” In the past, you could open Google Analytics and trust what you saw. Now you’re dealing with race conditions where consent tags fire too late and miss conversions entirely. Or scenarios where only “strictly necessary” cookies are working, leaving your marketing attribution completely dark.

The result is that you’re spending money on audiences you cannot see and optimising campaigns based on signals you cannot verify. You’re flying blind.

What Signal Loss Actually Means

Signal loss is the technical term for what happens when data degrades as it travels from a user’s device, through their browser’s privacy filters, to your marketing dashboard. Think of it like a game of telephone, except crucial information is being actively removed along the way.

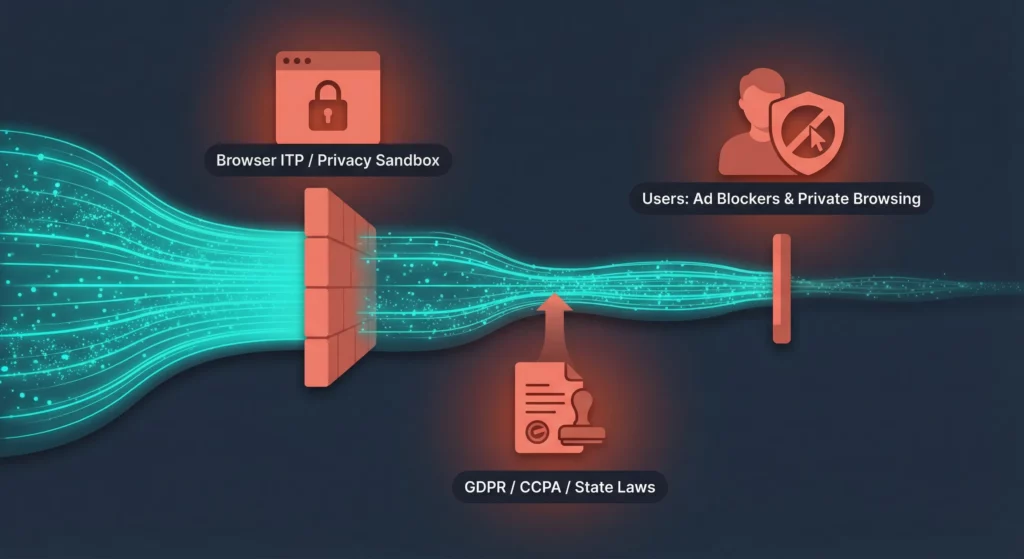

When someone interacts with your ad, multiple signals get generated: a click ID, their IP address, device type, and browsing context. In today’s privacy-focused web, these signals are systematically intercepted and stripped by three forces working against you.

First, browsers themselves are blocking tracking. Safari's Intelligent Tracking Prevention (ITP) and Chrome’s Privacy Sandbox initiatives have moved from passive observation to active interference. Second, privacy regulations like GDPR, CCPA, and new US state laws require explicit consent, turning data collection from an automatic default into a hurdle you have to clear with every visitor. Third, users themselves are increasingly refusing consent banners and installing ad blockers.

The consequence is stark: 30-50% of your actual conversions are never reported back to your ad platform. This breaks the algorithmic learning process that modern advertising depends on. Instead of knowing what’s working, the algorithms are forced to guess.

The Ghosting Problem

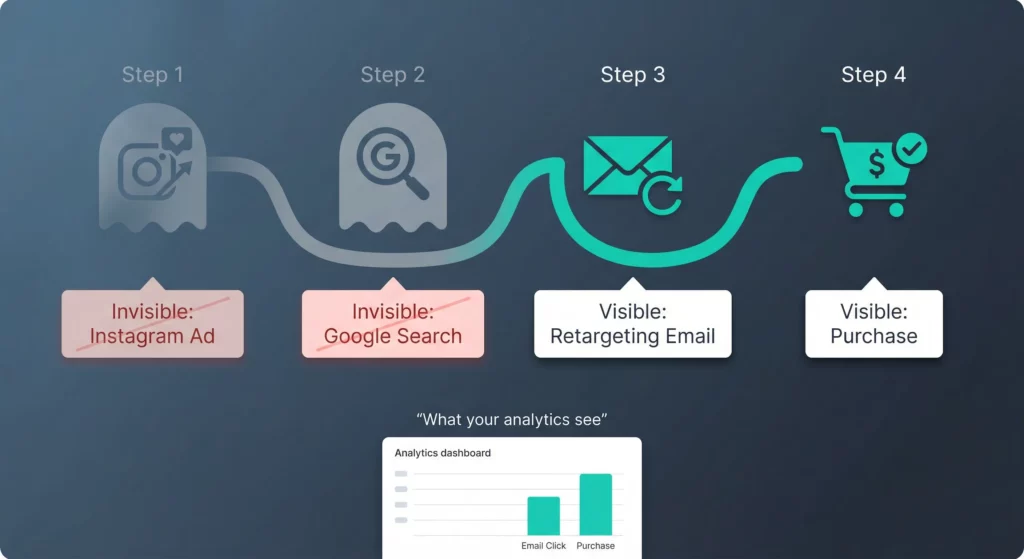

Here’s how signal loss plays out in practice. A customer discovers your business through an Instagram ad, searches for you on Google a week later, clicks a retargeting email, and finally makes a purchase. In a signal-rich environment, your attribution model would credit each of these touchpoints. You’d see the full customer journey.

In a signal-poor environment, that customer appears to materialise out of nowhere. They show up in your dashboard as Direct traffic or get attributed solely to that final email click. The Instagram ad that started everything? Invisible. The Google search that kept you top of mind? Gone.

This invisibility leads you to dangerous conclusions. You look at your dashboard and see that Facebook ads have a ROAS of 1.2x (losing money), while Direct traffic has a ROAS of 10x (wildly profitable). The logical decision seems obvious: cut Facebook spend and double down on whatever is driving that direct traffic.

This decision would be strategically fatal. That “direct” traffic was likely driven by the Facebook ads you’re about to cut. The ads are working, but iOS privacy settings are stripping the tracking parameters, so the conversions appear unattributed. When you cut the “unprofitable” channel, you kill the source of your “free” traffic. Your overall revenue collapses, and you have no idea why.

This is the flying blind trap, and businesses fall into it every day.

Why This Is Happening Now

The narrative you’ve probably heard is that “cookies are dead” or “cookie apocalypse is coming.” This oversimplifies what’s actually happening. The reality is more complex and more immediate.

The Chrome User Choice Trap

For years, the industry prepared for a single catastrophic event: Google Chrome removing all third-party cookies. Businesses planned upgrade cycles around this deadline. Then in mid-2024, Google announced a major pivot. Instead of removing cookies entirely, they would introduce a “User Choice” model.

Many business owners interpreted this as good news. A reprieve. Cookies saved.

This interpretation is dangerously wrong.

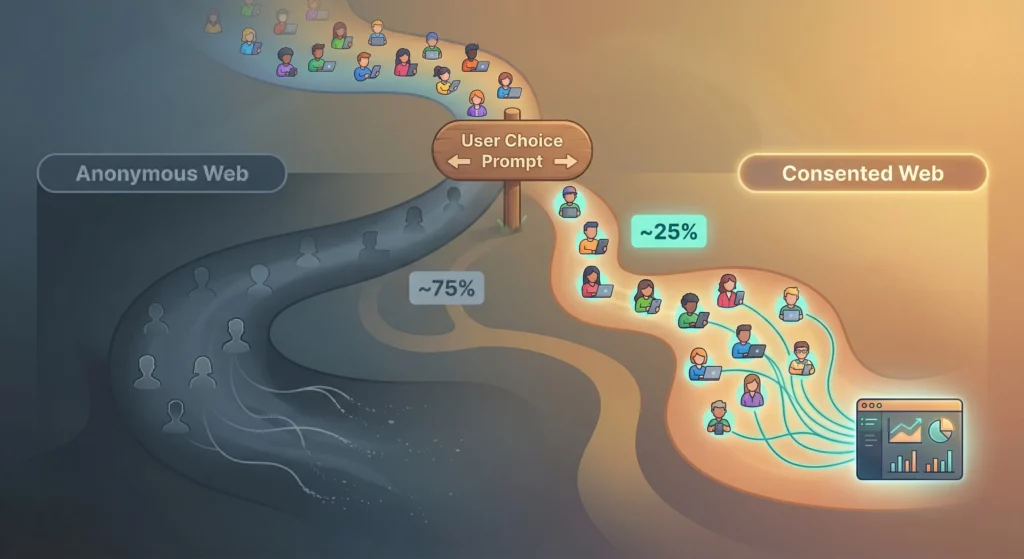

Chrome’s “User Choice” model fundamentally changes how data collection works by making users actively decide whether to allow tracking. Google’s implementation mirrors what Apple did with App Tracking Transparency (ATT) on iOS, which devastated Meta’s ad revenue in 2021. When users are presented with a binary choice between “allow tracking” or “protect privacy,” research suggests 70-80% choose to opt out.

Here’s why this matters for your business. You’re operating under a false sense of security. If you believe “cookies are saved” and don’t upgrade your infrastructure, you’re setting yourself up for a massive visibility drop as users encounter this prompt and overwhelmingly opt out.

The web is splitting into two distinct groups. There’s a “consented web” made up of a minority of users (likely older, less tech-savvy) who allow tracking. Then there’s an “anonymous web” consisting of a silent majority who opt out and become invisible to traditional tracking pixels.

This creates a data bias problem. Your analytics will heavily skew towards the consented cohort, giving you a distorted view of who your customers actually are. If your “consented” audience is predominantly 60+ years old, your advertising algorithms will optimise for that demographic. Meanwhile, you’re completely blind to the younger, privacy-conscious audience that might actually represent your core market.

Safari’s Silent Assassination of Attribution

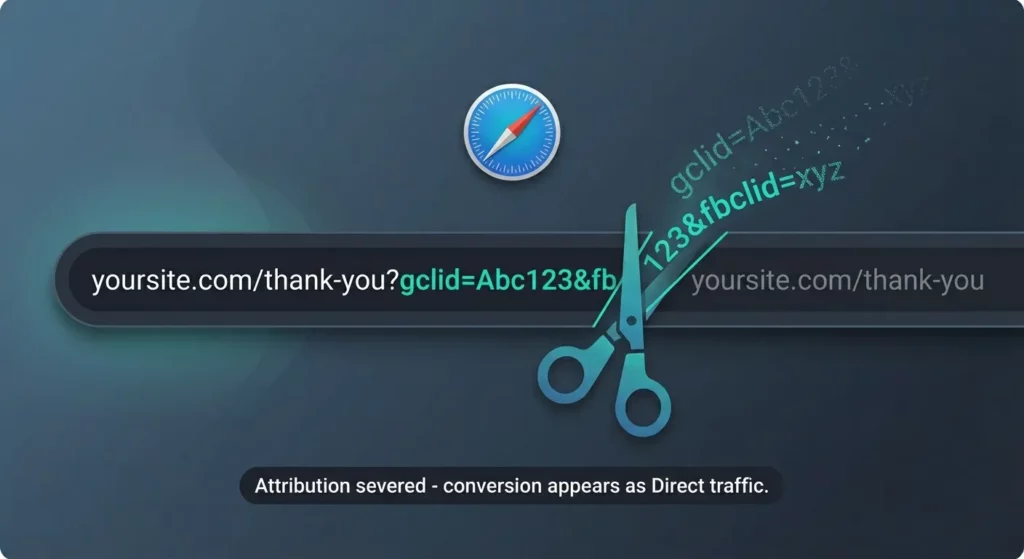

While Chrome takes a passive-aggressive “user choice” approach, Apple’s Safari has declared open war on marketing attribution. With Safari 26 and iOS 26, Apple expanded its Advanced Fingerprinting Protection (AFP) and Link Tracking Protection (LTP).

Here’s the mechanism that’s breaking your tracking. Marketing attribution relies on “decorating” URLs with unique identifiers. When someone clicks your ad, the platform appends a parameter to the URL. Google adds ?gclid=123 (the Google Click ID). Facebook adds ?fbclid=abc (the Facebook Click ID). This ID is the golden thread connecting that ad click to a future purchase.

Safari 26 identifies these known tracking parameters and strips them out in Private Browsing mode, Mail, and Messages. When someone clicks your ad link in an iPhone text message, the gclid disappears before they even reach your website.

Let me be clear about how devastating this is. When that click ID is removed, the connection between your ad spend and your revenue is permanently severed.

| Parameter | Function | Safari 26 Impact | Consequence for Your Business |
|---|---|---|---|
| gclid | Google Ads Click ID | Stripped in protected modes | Auto-tagging fails; conversions cannot be linked to specific keywords; “Direct” traffic spikes; Smart Bidding breaks down |
| fbclid | Meta Click ID | Stripped in protected modes | Attribution broken; cannot build custom audiences from clicks; Lookalike audiences degrade |
| utm_source | Analytics Source | Generally preserved | High-level reporting (Source/Medium) remains intact, but granular attribution (Campaign/Ad Content) often lost |
| msclkid | Microsoft Ads ID | Stripped in protected modes | Attribution broken; ROAS visibility lost |

Safari commands over 50% of the mobile browser market share in the US, so wherever this stripping applies it hits a large share of your mobile traffic. The user arrives at your site and converts, but the gclid is gone. The conversion gets recorded as Direct traffic, and your ad campaign appears to have failed completely.
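To make the mechanism concrete, here is a minimal Python sketch of what stripping a click ID does to attribution. The parameter list and the `attributed_channel` logic are simplified assumptions for illustration; the real list Safari uses is maintained by Apple, and real platforms attribute in more sophisticated ways.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Illustrative list only (an assumption), taken from the table above.
CLICK_ID_PARAMS = {"gclid", "fbclid", "msclkid"}


def strip_click_ids(url: str) -> str:
    """Return the URL with the known click-ID parameters removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in CLICK_ID_PARAMS
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


def attributed_channel(url: str) -> str:
    """Naive rule: a platform gets credit only if its click ID survived."""
    params = dict(parse_qsl(urlsplit(url).query))
    if "gclid" in params:
        return "Google Ads"
    if "fbclid" in params:
        return "Meta Ads"
    if "msclkid" in params:
        return "Microsoft Ads"
    return "no click ID"


# Hypothetical landing URL after an ad click.
landing = "https://myshop.example/product?utm_source=google&gclid=123"
stripped = strip_click_ids(landing)

print(attributed_channel(landing))   # Google Ads
print(stripped)                      # https://myshop.example/product?utm_source=google
print(attributed_channel(stripped))  # no click ID
```

Note that `utm_source` survives in this sketch, which matches the table: high-level source reporting remains, but the platform loses the identifier it needs to tie the sale to a specific click.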

This forces you to rely on metrics like Click-Through Rate (CTR) as a proxy for success. But CTR is a vanity metric that has no reliable correlation with profitability. A high CTR with broken attribution just means you’re paying for clicks you cannot connect to revenue.

The “Cookieless” Misunderstanding

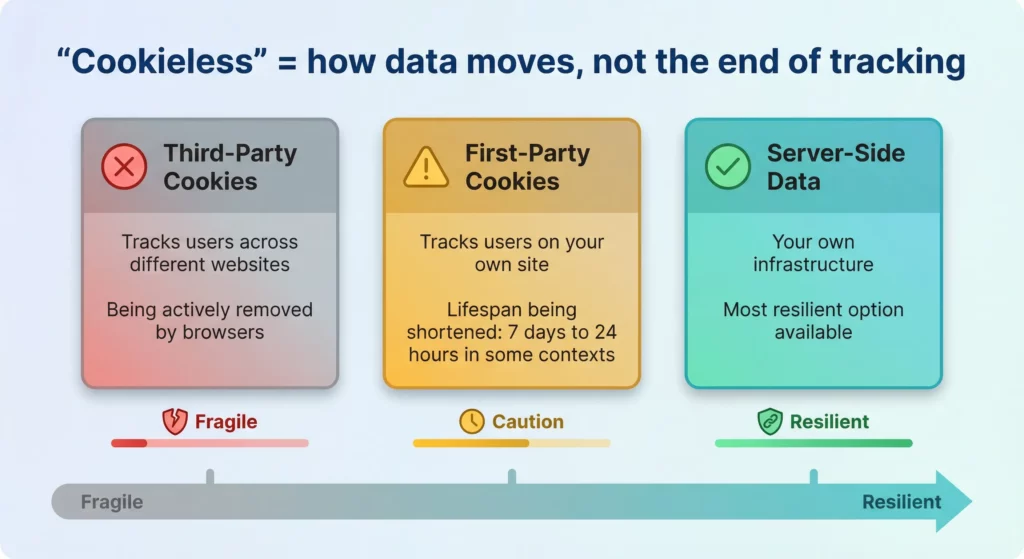

There’s a critical gap in how most business owners understand the term “cookieless.” Many interpret it to mean cookies are illegal or completely unavailable. This isn’t accurate.

“Cookieless” refers to how data is transported (server-side instead of client-side) or how it’s modelled (probabilistic instead of deterministic). It doesn’t mean the total absence of tracking capability.

The shift happening is from “client-side reliance” (trusting the browser to handle everything) to “server-side resilience” (owning your data infrastructure). When business owners ask about “workarounds,” they’re often asking about legitimate architectural upgrades like Server-Side Tracking without knowing the proper terminology.

Understanding the distinction between Third-Party Cookies (which track users across different websites) and First-Party Cookies (which track users on your own site) is vital. Safari and Chrome are attacking third-party cookies. First-party cookies remain viable, but their lifespan is being drastically shortened.

Safari’s ITP deletes first-party cookies after 7 days in some contexts and as quickly as 24 hours in others. This makes tracking “consideration cycles” nearly impossible. If a customer clicks your ad on Monday but purchases on Friday (outside that 24-hour window), the attribution is lost. The sale happens, but you have no idea which ad drove it.
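The Monday-to-Friday example can be checked with a few lines of Python. This is a simplified sketch: the function name and the dates are hypothetical, and it assumes the cookie is simply deleted once its lifetime has elapsed since the click.

```python
from datetime import datetime, timedelta


def cookie_survives(click: datetime, purchase: datetime, lifetime: timedelta) -> bool:
    """True if the purchase happens before the cookie set at click time expires."""
    return purchase - click <= lifetime


click = datetime(2025, 3, 3, 10, 0)     # a Monday
purchase = datetime(2025, 3, 7, 10, 0)  # the following Friday

print(cookie_survives(click, purchase, timedelta(hours=24)))  # False: attribution lost
print(cookie_survives(click, purchase, timedelta(days=7)))    # True: attribution kept
```

The same sale is either attributed or invisible depending only on which expiry rule applied to the cookie.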

How Signal Loss Destroys Your Profitability

Let’s connect the technical explanation to your bottom line. Signal loss isn’t just an IT problem or a tracking inconvenience. It directly inflates your Cost Per Acquisition (CPA) and artificially depresses your ROAS. It’s a profit and loss statement problem.

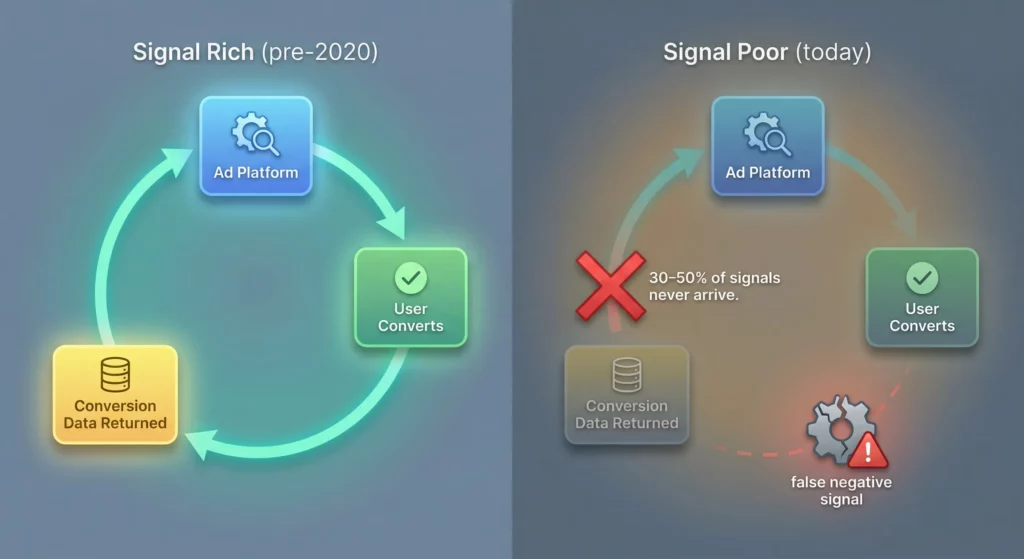

The Broken Feedback Loop

Modern advertising platforms like Google Ads, Meta, and TikTok are built on machine learning algorithms. These algorithms require a continuous stream of success signals to function properly. The system works like this: the platform bids on showing your ad to a user, the user converts, and that conversion data feeds back to the algorithm. The algorithm learns from this success and seeks out mathematically similar users to show your ads to next.

Signal loss breaks this feedback loop in a devastating way.

Here’s what happens. Due to consent refusals or Safari stripping parameters, 30-50% of your actual conversions never get reported back to the ad platform. The algorithm receives what’s called a false negative signal. It thinks the ads are failing even though they’re generating real revenue in your bank account.

This creates a downward spiral. The algorithm stops bidding on high-value audiences because it cannot “see” them converting. To compensate, the system drifts towards lower-quality inventory or extremely broad audiences in a desperate attempt to find “easy” conversions that are visible. Your CPA inflates because efficiency drops and targeting degrades.
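A small worked example shows how the false negative looks from the algorithm’s side. The figures below are hypothetical, and the function is only a sketch of the arithmetic, not of how any platform actually computes its metrics.

```python
def reported_cpa(spend: float, true_conversions: int, loss_rate: float) -> float:
    """CPA as the platform sees it when a share of conversions goes unreported."""
    reported_conversions = true_conversions * (1 - loss_rate)
    return spend / reported_conversions


spend = 10_000.0        # hypothetical monthly ad spend
true_conversions = 200  # what actually happened in the bank account

print(f"True CPA: {spend / true_conversions:.2f}")                           # 50.00
print(f"Seen at 30% loss: {reported_cpa(spend, true_conversions, 0.30):.2f}")  # 71.43
print(f"Seen at 50% loss: {reported_cpa(spend, true_conversions, 0.50):.2f}")  # 100.00
```

Nothing about the campaign changed between the three lines. Only the share of conversions reported back did, and that is the number the bidding algorithm optimises against.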

Data from Triple Whale’s analysis of Black Friday Cyber Monday 2024 showed that while ad spend remained high across the board, CPA was rising on platforms like Google Ads due to precisely this loss of signal fidelity. Businesses were paying more to acquire each customer not because competition increased, but because their targeting mechanisms were operating partially blind.

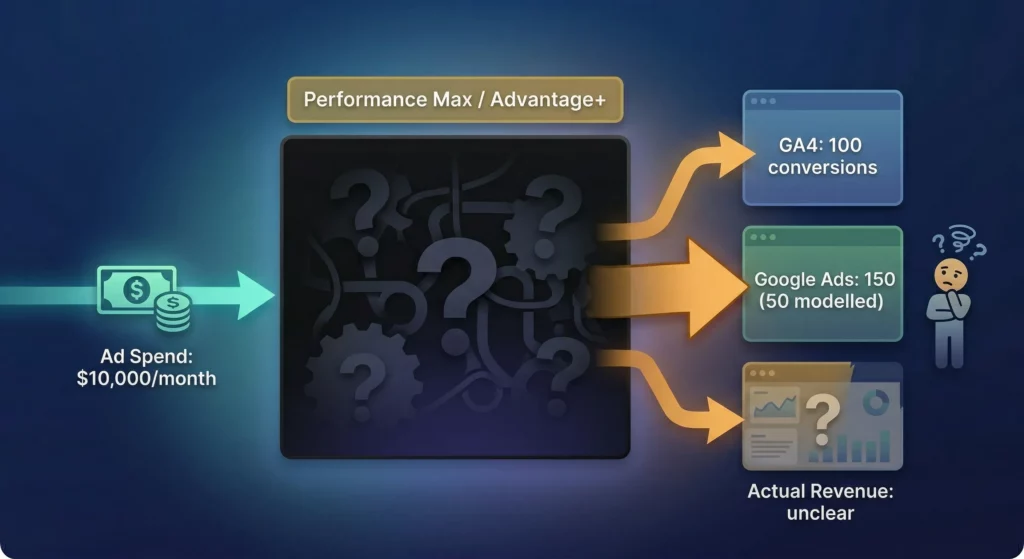

The Black Box Problem

As deterministic data disappears, platforms are pushing automated “black box” solutions like Performance Max (PMax) and Advantage+. These tools rely heavily on modelled data to fill the gaps left by missing signals.

This creates a specific trust problem. When GA4 reports 100 conversions but Google Ads reports 150 (with 50 of those marked as “modelled”), you’re left questioning which number reflects reality. Are you actually profitable, or are you looking at algorithmic hallucination?

Without a strong foundation of first-party data to calibrate these models, the output becomes increasingly speculative. A study examining 24,702 PMax campaigns revealed that while automation was widely adopted, advertisers consistently reported frustration with “flying blind.” There were no channel-level insights to explain why performance fluctuated from week to week.

This lack of transparency makes it impossible to iterate on strategy. If PMax works one month, you don’t know why it worked, so you cannot replicate it. If it fails the next month, you don’t know how to fix it. You’re stuck in a black box, pulling levers with no visibility into the machinery.

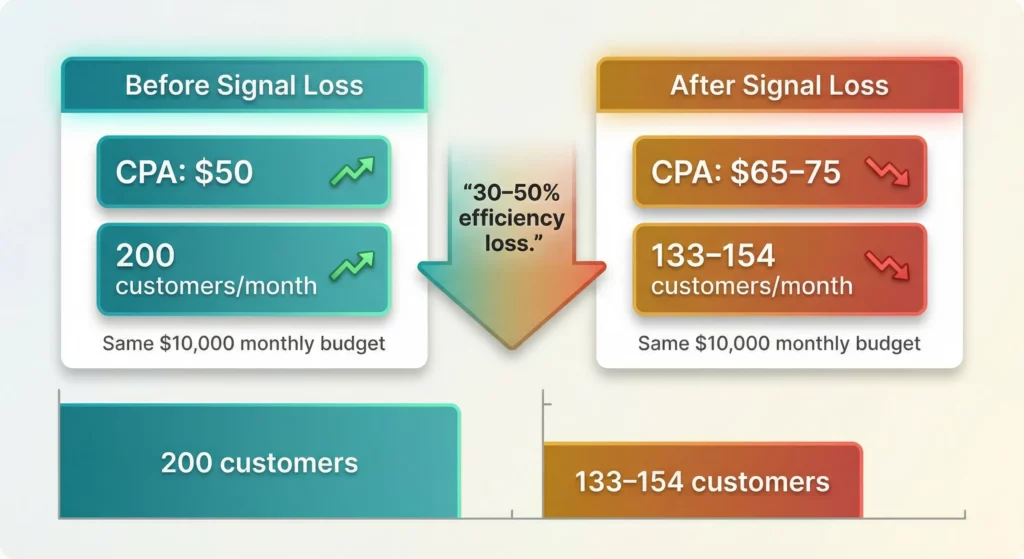

Quantifying the Cost of Blindness

Research across multiple industries indicates that businesses failing to adapt to signal loss are facing a 30-50% increase in CPA. This isn’t a small efficiency loss. For a business spending $10,000 per month on ads with a previous CPA of $50, this increase means you’re now paying $65-$75 per customer for the same result. Your monthly customer acquisition drops from 200 customers to 133-154 customers for the same budget.
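The arithmetic behind those figures is simple enough to reproduce, which is useful if you want to plug in your own budget. The function name is hypothetical; the numbers are the ones from the paragraph above.

```python
def customers_acquired(budget: float, cpa: float) -> float:
    """Customers a fixed budget buys at a given cost per acquisition."""
    return budget / cpa


budget = 10_000.0
for cpa in (50.0, 65.0, 75.0):
    print(f"CPA ${cpa:.0f}: about {round(customers_acquired(budget, cpa))} customers")
# CPA $50: about 200 customers
# CPA $65: about 154 customers
# CPA $75: about 133 customers
```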

The problem compounds when you factor in the inability to distinguish between new and returning customers. When cookies get deleted, a customer who bought from you two weeks ago might re-enter your funnel and trigger your retargeting ads. You pay to advertise to someone who was already going to buy again. This type of waste used to be minimal because cookies persisted. Now it’s a significant drain.

The ghosting of revenue attribution means you’re also misallocating credit for sales. Profitable campaigns get defunded because they appear ineffective in dashboards. Meanwhile, you might be over-investing in channels that are merely capturing demand created by other touchpoints.

Gartner has predicted that 50% of consumers will significantly limit their social media interactions by 2025 due to perceived platform quality decay. This decline in social media use, combined with signal loss, creates a perfect storm. Your audiences are harder to reach, harder to track, and more expensive to acquire. The cost of customer acquisition is rising not just because of ad price inflation, but because the efficiency of the entire targeting mechanism is breaking down.

The Regulatory Reality You Need to Understand

The legal environment around data privacy is often viewed as a static compliance hurdle. Get a cookie banner, tick the box, move on. This perspective misses the strategic reality of 2025: privacy laws now directly dictate your data quality and advertising effectiveness.

How Privacy Laws Have Evolved

GDPR set the initial framework in 2018, but the landscape has become significantly more complex. Recent US state laws have added layers of requirements that affect businesses regardless of where they’re based. The Colorado Privacy Act amendments and new laws in Maryland and other states require rigorous data minimisation and explicit consent, particularly for sensitive data.

The updated Colorado Privacy Act (effective late 2025) introduces strict requirements for “universal opt-out mechanisms” and heightened protections for minors. Even if your business isn’t based in Colorado, you likely need to adhere to these standards if you sell to US consumers. The patchwork of state laws makes it operationally difficult to segregate users by state, so the practical approach is to meet the strictest standard everywhere.

These laws aren’t just about avoiding fines. They’re about whether you have usable data at all. If you cannot demonstrate valid consent, that data is toxic. You cannot use it for modelling, retargeting, or algorithmic training without exposing yourself to legal risk.

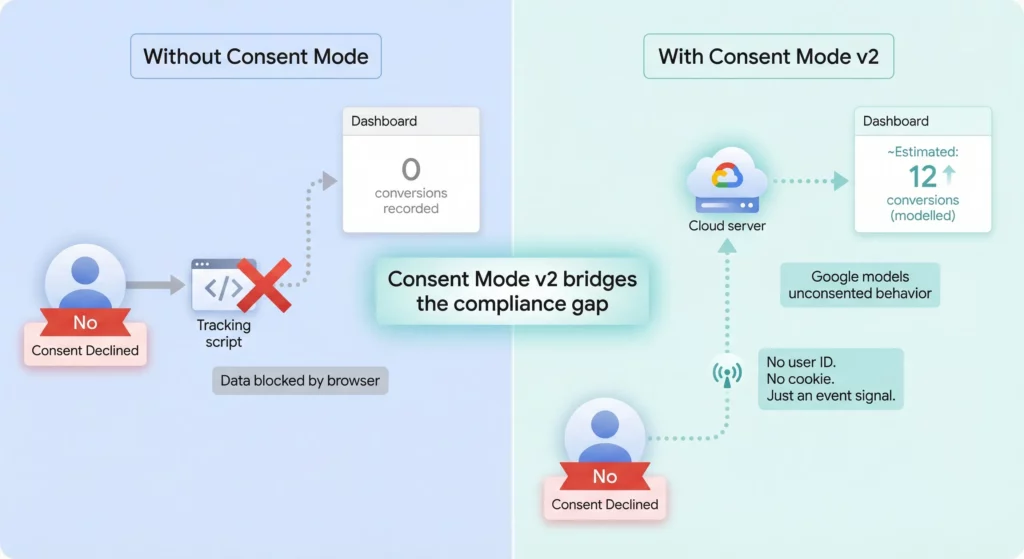

Consent Mode v2: Your Data Recovery Engine

One of the most significant and misunderstood developments is Google's Consent Mode v2. Most businesses view it as just another compliance checkbox. This perspective completely misses its strategic value. Consent Mode v2 is fundamentally a data recovery tool.

Here’s how it works. In a traditional setup, when a user declines cookies, your tracking scripts get blocked entirely. The data is lost. You see nothing. Consent Mode v2 changes this binary outcome.

It allows your website to send “cookieless pings” to Google servers even when consent is denied. These pings contain no identifying information. There’s no user ID, no cookie ID. But they convey that an event happened: a page view, an add-to-cart action, a conversion.

The strategic breakthrough comes from how Google uses these anonymous pings. GA4 uses them to model the behaviour of unconsented users based on the observed behaviour of consented users. Think of it like weather forecasting. You have accurate measurements from some weather stations (consented users) and use those to model what’s happening in areas without stations (unconsented users).

| Scenario | Tracking Mechanism | Data Result | Marketing Impact |
|---|---|---|---|
| No Consent Mode | User rejects cookies → Scripts blocked | 0 data recorded | Marketing appears 50% less effective; CPA doubles |
| With Consent Mode v2 | User rejects cookies → Anonymous ping sent | Google models the session | Marketing data recovers ~70% of visibility; trends preserved |

This modelling bridges the gap between strict compliance and marketing visibility. You’re not tracking users who opted out. You’re using aggregate patterns to fill in the blind spots.
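The weather-station idea can be sketched as a simple proportional extrapolation. This is an assumption made purely for illustration: Google’s actual modelling is more sophisticated and not reproduced here, and all names and numbers below are hypothetical.

```python
def estimate_total_conversions(
    consented_sessions: int,
    consented_conversions: int,
    unconsented_pings: int,
) -> float:
    """Naive estimate: apply the observed consented conversion rate to
    sessions that only produced anonymous pings."""
    observed_rate = consented_conversions / consented_sessions
    modelled_conversions = unconsented_pings * observed_rate
    return consented_conversions + modelled_conversions


# Hypothetical month: 600 consented sessions with 30 conversions,
# plus 400 sessions where consent was denied and only pings were sent.
total = estimate_total_conversions(600, 30, 400)
print(f"Observed: 30, estimated total: {total:.0f}")  # Observed: 30, estimated total: 50
```

Without the pings there is no count of unconsented sessions to extrapolate over, which is why blocking scripts entirely leaves nothing to model.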

For businesses operating in the European Economic Area and UK, Consent Mode v2 is effectively mandatory if you want to continue using audience features in Google Ads. Ignoring this requirement results in the complete loss of remarketing capabilities and severe degradation in conversion tracking. Google has been explicit about this: upgrade or lose access to critical advertising features.

The Consent Paradox

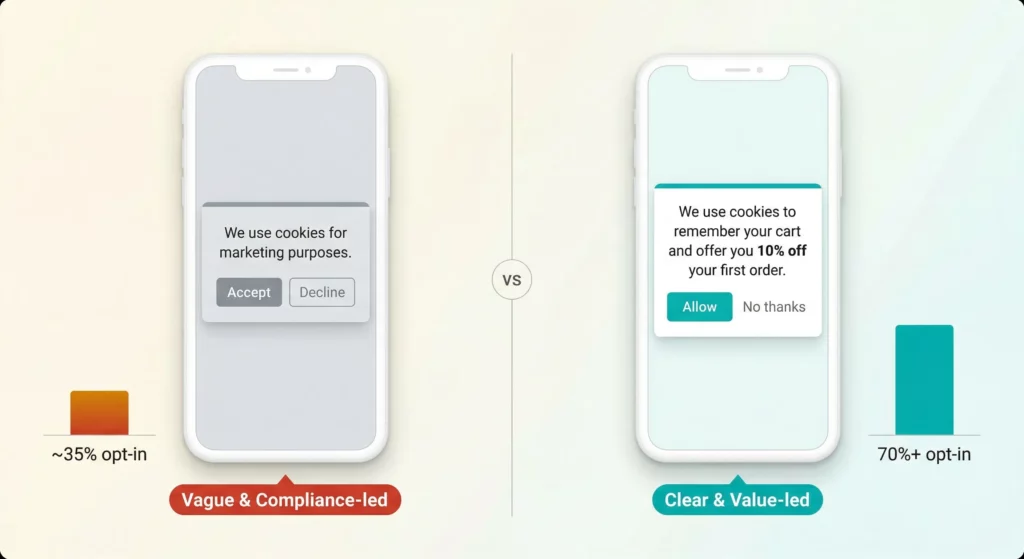

Here’s something most businesses miss: the design of your consent banner directly determines how much data you can collect. A banner that’s technically compliant but poorly designed can result in opt-in rates as low as 30-40%. An optimised banner that uses clear value propositions and user-friendly design can achieve 70%+ opt-in rates without resorting to illegal “dark patterns.”

The conversation needs to shift from “Do I legally need this?” to “How do I optimise this for trust?” High consent rates are your first line of defence against signal loss.

The value exchange must be explicit. Users are willing to share data if they understand what they get in return: personalisation, discounts, convenience, better recommendations. If your consent prompt is merely legalese that says “We use cookies to improve your experience” with no specifics, the default response is “No.”

Consider testing banners that say “We use cookies to remember your cart and offer you 10% off your first order” versus “We use cookies for marketing purposes.” The first version tells users exactly what they gain. The second version sounds like surveillance.

Building a Resilient Measurement Architecture

Recovering from signal loss requires upgrading your fundamental infrastructure. The era of the simple “client-side pixel” is ending. The new standard is “server-side resilience” and “first-party data ownership.”

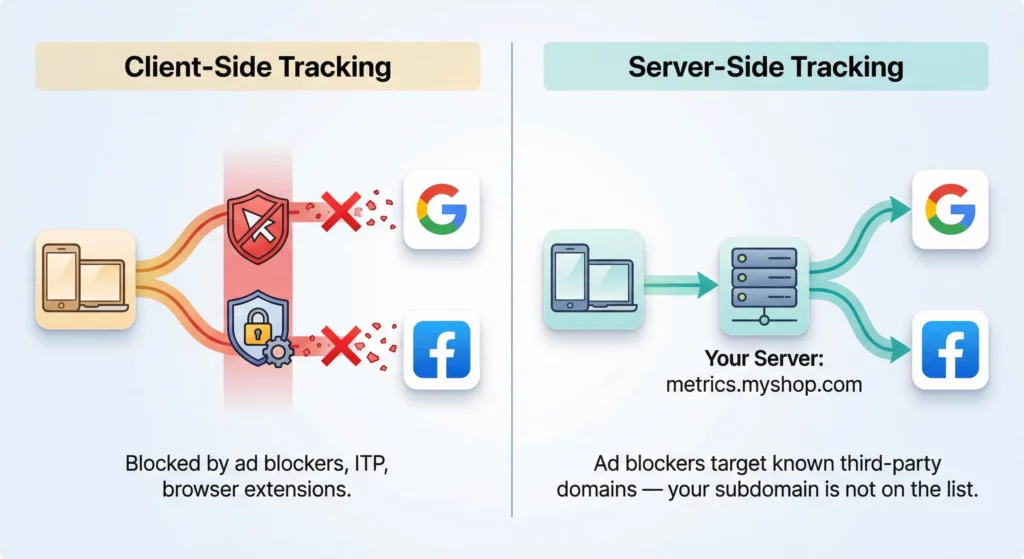

Server-Side Tracking: The Invisible Upgrade

Standard client-side tracking works like this: when someone visits your site, their browser sends data directly to Facebook or Google. This path is fragile. It’s easily blocked by ad blockers, Safari’s ITP, browser extensions, and network-level filters.

Server-Side Tracking (SST) moves the data processing from the user’s volatile device to a stable server that you control. Instead of the user’s browser talking directly to Facebook, it talks to your server first. Your server then decides what data to send to Facebook and when.

Here’s why this matters for your business. First, you bypass ad blockers. The data request goes to your own subdomain (something like metrics.myshop.com) rather than a known third-party tracker (like google-analytics.com). Ad blocking lists target known tracking domains. Your own subdomain isn’t on those lists.

Second, you gain cookie control. Servers can set HTTP-only cookies that are more secure and resistant to some browser expiration limits. Safari continues to close loopholes, but server-side cookies are more resilient than client-side ones.

Third, you control data governance. Your business decides exactly what data to share with Facebook or Google, rather than allowing the pixel to scrape everything on the page. This is crucial for privacy compliance and user trust.
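The data governance point can be sketched as an allow-list applied on your server before anything is forwarded. The field names, the allow-list, and the consent rule below are hypothetical; a real setup would follow the destination platform’s schema and your own consent policy.

```python
# Hypothetical allow-list: only these event fields ever leave your server.
ALLOWED_FIELDS = {"event_name", "value", "currency", "event_time"}


def build_forward_payload(event: dict, consent_granted: bool) -> dict:
    """Keep only allow-listed fields; add the hashed email only with consent."""
    payload = {key: value for key, value in event.items() if key in ALLOWED_FIELDS}
    if consent_granted and "hashed_email" in event:
        payload["hashed_email"] = event["hashed_email"]
    return payload


raw_event = {
    "event_name": "purchase",
    "value": 59.0,
    "currency": "USD",
    "ip_address": "203.0.113.7",  # never forwarded in this sketch
    "hashed_email": "<sha256 hex digest>",
}

print(build_forward_payload(raw_event, consent_granted=False))
# {'event_name': 'purchase', 'value': 59.0, 'currency': 'USD'}
```

With a client-side pixel this filtering step does not exist, because the browser talks to the platform directly.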

The ROI reality check: Server-Side Tracking isn’t free. It requires server infrastructure through services like Google Cloud or Stape, which incur monthly costs typically ranging from $30-$200+ depending on traffic volume and configuration. For very small businesses, the cost and technical complexity might outweigh the benefits.

However, for businesses spending more than $5,000 per month on ads or experiencing data discrepancies above 20%, this is a necessary investment. The cost of doing nothing is the permanent loss of 20-30% of your conversion data. If you’re spending $5,000 monthly and losing 25% of conversions, that’s $1,250 worth of invisible results. Over a year, that’s $15,000 in untrackable performance. The server infrastructure pays for itself many times over.
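To run the same break-even check with your own numbers, a rough sketch follows. It uses the figures from this section; the function name is hypothetical, and treating untracked conversions as an equal share of spend is the same simplification the paragraph above makes.

```python
def untracked_spend(monthly_ad_spend: float, conversion_loss_rate: float) -> float:
    """Monthly ad spend whose results never appear in your dashboards."""
    return monthly_ad_spend * conversion_loss_rate


monthly = untracked_spend(5_000.0, 0.25)
print(f"Invisible per month: ${monthly:,.0f}")      # Invisible per month: $1,250
print(f"Invisible per year: ${monthly * 12:,.0f}")  # Invisible per year: $15,000

# Server cost range quoted above, annualised.
print(f"Server cost per year: ${30 * 12:,} to ${200 * 12:,}")  # $360 to $2,400
```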

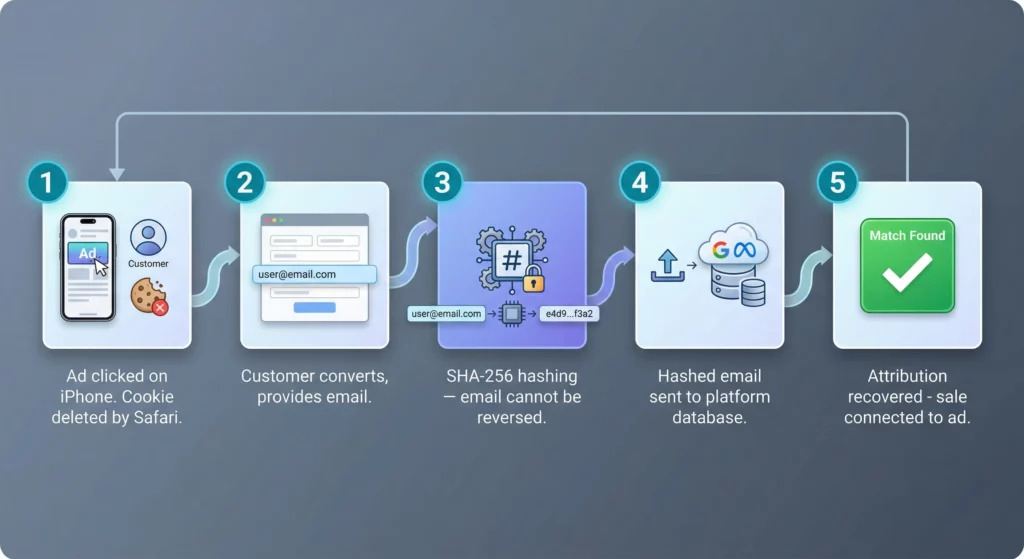

Enhanced Conversions: The Attribution Patch

Enhanced Conversions (EC) is one of the most frequently misunderstood tools available. It’s not a driver of lead quality or a targeting improvement. It’s an attribution patch that helps platforms claim credit for conversions they would otherwise miss.

Here’s the mechanism. When someone converts on your website, you capture first-party data they provided: email address, phone number, sometimes name and address. You normalise this data and hash it with SHA-256 (a one-way function, not encryption), then send the hash to the ad platform. The platform matches it against hashes of its own user records (like signed-in Google accounts or Facebook profiles).

The benefit is attribution repair. It allows the platform to claim credit for conversions that happened on a device where cookies were deleted or blocked. The user clicked your Google ad on their iPhone last week, but Safari deleted the cookie after 24 hours. They return to your site and buy. Without Enhanced Conversions, Google has no idea this sale came from their ad. With Enhanced Conversions, Google matches the hashed email to their user database and connects the dots.
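The hashing step itself is short. The sketch below uses Python’s standard `hashlib`; the normalisation shown (trim whitespace, lowercase) is a minimal assumption, and each platform documents its own exact normalisation rules, which you should follow in a real implementation.

```python
import hashlib


def normalize_email(email: str) -> str:
    """Minimal normalisation (an assumption): trim whitespace and lowercase."""
    return email.strip().lower()


def hash_email(email: str) -> str:
    """Return the SHA-256 hex digest of the normalised email address."""
    return hashlib.sha256(normalize_email(email).encode("utf-8")).hexdigest()


# The same customer typed two different ways produces the same hash,
# which is what lets the platform match it against its own records.
print(hash_email(" Jane@Example.com ") == hash_email("jane@example.com"))  # True
```

Normalising before hashing matters because SHA-256 is exact: a single stray space or capital letter yields a completely different digest and the match fails.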

Implementation of Enhanced Conversions is considerably easier than full Server-Side Tracking. It offers a significant improvement in attribution fidelity with lower technical overhead. For most businesses, this is “low-hanging fruit” that should be implemented immediately.

The privacy nuance: this approach requires an update to your privacy policy, because you’re technically sharing customer data (even if hashed) with a third party. Hashing keeps the raw email address out of the transmission, but the platform can still recognise any address it already holds, so you’re transmitting a data point derived from customer information. Be transparent about this in your disclosures.

First-Party and Zero-Party Data Strategy

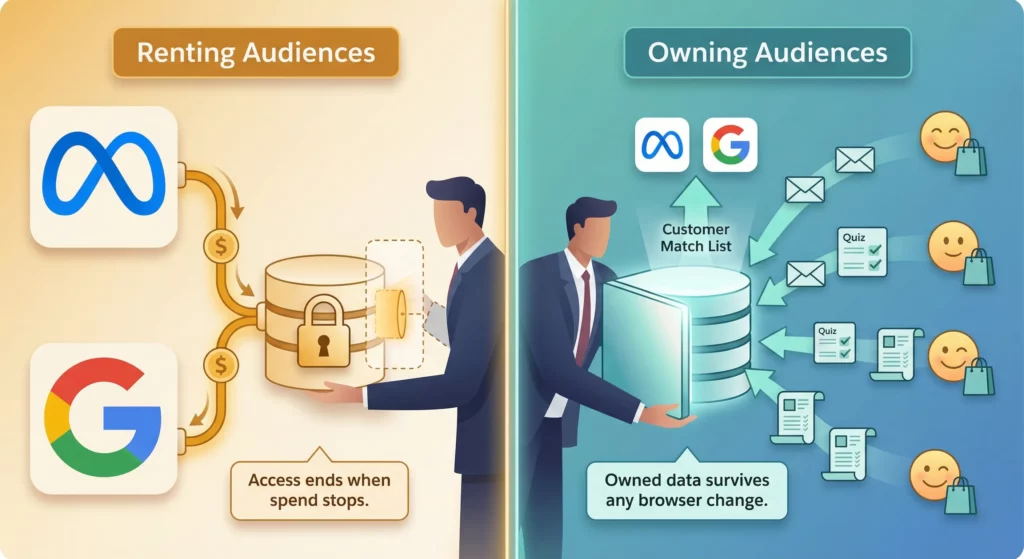

The ultimate hedge against browser privacy changes is owning your customer data directly. This means building assets that belong to you, not renting audiences from platforms.

First-Party Data is information you collect directly from customers with their consent: purchase history, website behaviour, support interactions, product preferences. This data lives in your own database or CRM, not in Facebook’s servers.

Zero-Party Data is even more valuable. This is information a customer intentionally and proactively gives to your brand to improve their experience. Examples include quiz answers (“Do you have dry or oily skin?”), preference centre selections (“Email me about new arrivals but not sales”), or explicit feedback (“I’m shopping for my teenage daughter”).

Zero-party data is extraordinarily valuable because it’s explicit, accurate, and completely privacy-compliant. You’re not inferring that someone has acne based on their browsing history (which Safari blocks anyway). They’re telling you directly that they have acne and want products to help.

The strategic shift is moving from “renting audiences” (using Facebook’s targeting based on their surveillance) to “owning audiences” (building email lists, SMS subscribers, and customer databases). This creates “signal resilience”: the ability to upload customer lists (Customer Match in Google, Custom Audiences in Meta) to re-seed the advertising algorithms even when pixels fail.

Here’s a practical example. A skincare brand implements a “Skin Type Quiz” on their website. The quiz collects explicit data on skin concerns: acne, ageing, dryness, sensitivity. Instead of relying on Facebook to infer who has acne based on external browsing history (which is now blocked), the brand has the explicit data point directly from the customer.

They can then upload a hashed list of “Acne-Concerned Customers” to Facebook to create a high-fidelity Lookalike Audience. This completely bypasses tracking restrictions because the seed data is owned, not rented. Facebook isn’t surveilling behaviour across the web. You’re giving them a precise list of qualified customers and asking them to find similar users based on Facebook’s internal signals (demographic patterns, interest patterns, on-platform behaviour).
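As a rough sketch of the quiz example, the seed list is just a filter over data you already own. The record layout, the sample emails, and the function name are hypothetical, and the actual upload format is platform-specific and not shown here.

```python
import hashlib

# Hypothetical quiz responses stored in your own database.
quiz_responses = [
    {"email": "ana@example.com", "concern": "acne"},
    {"email": " Ana@Example.com", "concern": "acne"},  # same person, typed differently
    {"email": "ben@example.com", "concern": "dryness"},
    {"email": "cy@example.com", "concern": "acne"},
]


def seed_list(responses: list, concern: str) -> list:
    """Deduplicated SHA-256 hashes of the emails that reported a given concern."""
    hashes = {
        hashlib.sha256(r["email"].strip().lower().encode("utf-8")).hexdigest()
        for r in responses
        if r["concern"] == concern
    }
    return sorted(hashes)


acne_seed = seed_list(quiz_responses, "acne")
print(len(acne_seed))  # 2
```

The segment comes entirely from what customers told you, so nothing in this step depends on cookies or pixels.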

This approach is privacy-compliant, highly effective, and resilient to any future browser changes.

Redefining How You Measure Success

As deterministic attribution dies, you need to adopt aggregate measurement models. The question “Which exact ad drove this specific sale?” is becoming increasingly unanswerable. The new questions you need to ask are “How efficient is my total marketing spend?” and “What is the correlation between my spending and my revenue?”

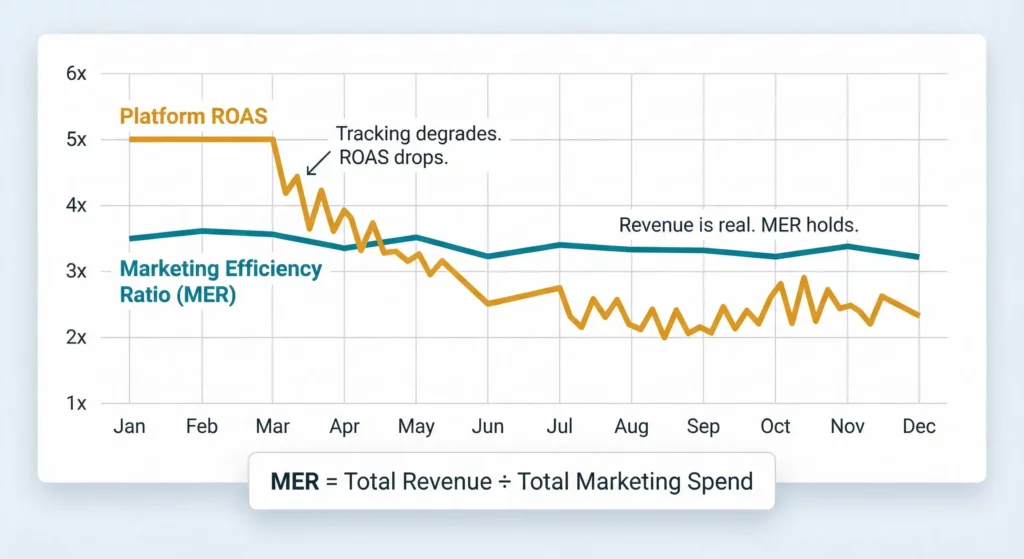

Marketing Efficiency Ratio: Your North Star Metric

For business owners navigating the signal loss era, the most honest metric available in 2025 is Marketing Efficiency Ratio (MER). The calculation is simple: Total Revenue divided by Total Marketing Spend.

Here’s the strategic logic. MER ignores attribution wars entirely. It doesn’t care whether Facebook claims the sale or Google claims the sale or if they both claim the same sale. It simply asks: “For every $1 we spent on marketing across all channels, how many dollars entered our bank account?”

This provides strategic value by preventing you from turning off profitable advertising just because a Last-Click attribution model in GA4 says it isn’t working. MER gives you a blended view of reality that remains stable even as cookie-based tracking crumbles.

What’s a healthy MER? This depends on your profit margins, but a common benchmark for e-commerce businesses is 3.0-4.0 (meaning every $1 in marketing spend generates $3-4 in revenue). Service businesses with higher margins might target 4.0-6.0. Lower-margin businesses might accept 2.0-3.0.

Here’s where MER becomes strategically critical. It’s common for platform-reported ROAS to drop while MER remains stable. When you see this pattern, it means your ads are still working, but the tracking is failing. If you react to the dropping ROAS by cutting spend, you’ll watch your MER crash. This proves the ads were driving “invisible” revenue that only showed up in your bank account, not in your dashboards.
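The pattern described above (platform ROAS falls while MER holds) is easy to check mechanically. In this sketch the function names and the 5% tolerance are assumptions chosen for illustration, not an industry standard.

```python
def mer(total_revenue: float, total_marketing_spend: float) -> float:
    """Marketing Efficiency Ratio: total revenue / total marketing spend."""
    return total_revenue / total_marketing_spend


def roas_drop_looks_like_tracking_loss(
    mer_prev: float,
    mer_now: float,
    roas_prev: float,
    roas_now: float,
    tolerance: float = 0.05,  # assumed threshold for "stable"
) -> bool:
    """True when platform ROAS fell noticeably while MER stayed roughly flat."""
    mer_stable = abs(mer_now - mer_prev) / mer_prev <= tolerance
    roas_fell = roas_now < roas_prev * (1 - tolerance)
    return mer_stable and roas_fell


print(mer(40_000, 10_000))  # 4.0

# Hypothetical months: MER moves from 4.0 to 3.9, platform ROAS from 3.2 to 2.1.
print(roas_drop_looks_like_tracking_loss(4.0, 3.9, 3.2, 2.1))  # True
```

If both numbers fall together, the check returns `False`, which points to a genuine performance problem rather than a measurement one.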

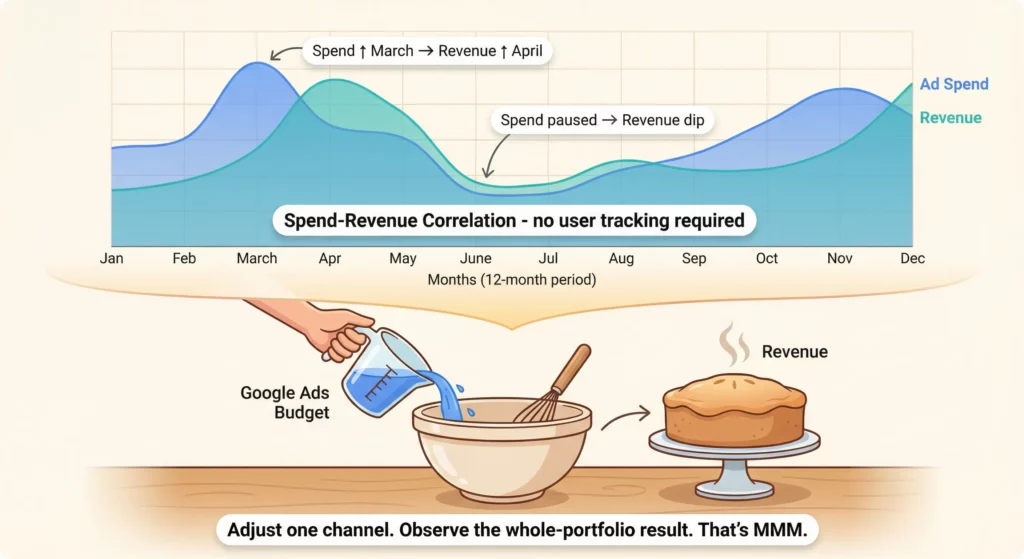

Media Mix Modelling for Everyone

Historically, Media Mix Modelling (MMM, also called Marketing Mix Modelling) was a statistical tool reserved for Fortune 500 companies with massive budgets and dedicated data science teams. The complexity and cost made it inaccessible to small and medium businesses.

That’s changing rapidly. Simplified tools and even spreadsheet templates are making MMM accessible to businesses of all sizes.

The mechanism works like this. MMM uses your historical data to find correlations between spending peaks and revenue peaks. It’s analogous to baking a cake: you change the amount of sugar (your Google Ads budget) and observe how the taste (your revenue) changes, without needing to track every individual grain of sugar.

You look at 12-24 months of data and identify patterns. When you increased Facebook spend in March, did revenue increase proportionally? When you paused Google Ads in June, did revenue drop? The statistical model identifies which channels have the strongest correlation with revenue growth, accounting for external factors like seasonality.
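A toy version of the idea, for a single channel, is a correlation and a fitted slope between monthly spend and monthly revenue. This is a deliberately simplified sketch: real MMM uses multiple channels and controls for seasonality and lag effects, and the monthly figures below are made up for illustration.

```python
from math import sqrt


def pearson(xs: list, ys: list) -> float:
    """Pearson correlation between two equal-length series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


def revenue_per_extra_dollar(spend: list, revenue: list) -> float:
    """Least-squares slope: estimated revenue change per extra $1 of spend."""
    n = len(spend)
    mean_s, mean_r = sum(spend) / n, sum(revenue) / n
    cov = sum((s - mean_s) * (r - mean_r) for s, r in zip(spend, revenue))
    var = sum((s - mean_s) ** 2 for s in spend)
    return cov / var


# Hypothetical monthly totals for one channel.
facebook_spend = [2000, 2500, 4000, 3000, 1500, 3500]
total_revenue = [14000, 15500, 21000, 17500, 12000, 19000]

print(f"Correlation: {pearson(facebook_spend, total_revenue):.2f}")
print(f"Revenue per extra $1: {revenue_per_extra_dollar(facebook_spend, total_revenue):.2f}")
```

Both inputs are totals you already have in your ad accounts and your bank statements, so nothing here requires user-level tracking. Correlation alone does not prove causation, which is why fuller models also account for seasonality and other channels.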

The privacy advantage of MMM is enormous. Because it uses aggregate data (total spend versus total revenue) rather than user-level tracking, it needs no cookies, click IDs, or consent signals, so browser restrictions and signal loss cannot degrade it. Safari can strip every tracking parameter it wants. Your MMM model doesn’t care because it’s measuring at the macro level.

This approach is the future of resilient measurement. It accepts that individual-level attribution is dying and focuses instead on understanding channel effectiveness at the portfolio level.

Your Action Plan: From Blind to Resilient

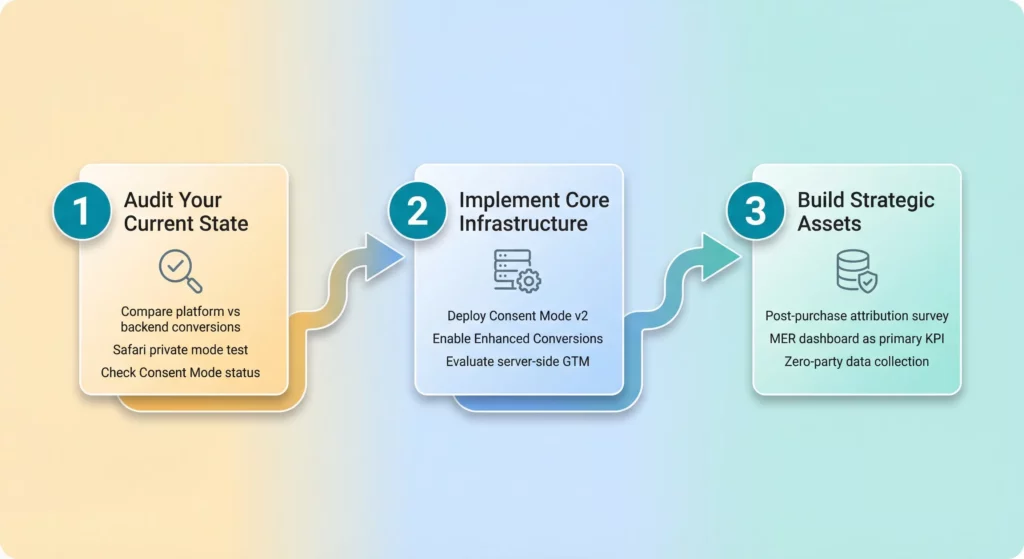

The transition from “flying blind” to “data resilience” requires a structured approach. This isn’t a task you can delegate entirely to your IT department or agency. It requires strategic oversight because the decisions you make will fundamentally affect your advertising effectiveness and profitability.

Step 1: Audit Your Current State

Start with a signal loss assessment. Compare your ad platform conversion data (Google Ads, Meta Ads Manager) against your backend sales data (Shopify, WooCommerce, CRM) for the last 30 days. Calculate the discrepancy percentage. If the gap exceeds 15-20%, you have a signal loss crisis that’s actively costing you money.
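The discrepancy calculation can be sketched in a few lines. The function names and the sample counts are hypothetical; the 15% default threshold is the lower end of the range given above.

```python
def discrepancy_pct(platform_conversions: int, backend_orders: int) -> float:
    """Gap between platform-reported conversions and backend orders,
    as a percentage of backend orders."""
    return abs(backend_orders - platform_conversions) * 100 / backend_orders


def has_signal_loss_problem(
    platform_conversions: int, backend_orders: int, threshold_pct: float = 15.0
) -> bool:
    """True when the gap exceeds the chosen threshold."""
    return discrepancy_pct(platform_conversions, backend_orders) > threshold_pct


# Hypothetical last-30-days numbers: 78 conversions in the ad platform,
# 100 orders in the store backend.
print(discrepancy_pct(78, 100))          # 22.0
print(has_signal_loss_problem(78, 100))  # True
```

The absolute value is used because platforms can over-report as well as under-report; either direction means the dashboard cannot be trusted as it stands.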

Conduct a browser inspection test. Open Safari on an iPhone in Private Mode and visit your website by clicking a Google ad (you can trigger your own ads by searching for your brand plus product category). Watch the URL bar carefully after the page loads. If the gclid parameter disappears, your click-level attribution is being actively stripped. This is a clear sign that Safari’s Link Tracking Protection is killing your tracking. Campaign-level UTM parameters are typically left in place, so check them separately: if they vanish as well, something else in the chain is dropping them.
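If you want to document the test rather than eyeball it, copy the ad's destination URL and the URL shown after the page loads, then compare them. The sketch below does this with Python's standard library. The function name, the example.com URLs, and the short list of click IDs are illustrative assumptions, not an exhaustive inventory of what any browser removes.

```python
from urllib.parse import parse_qs, urlsplit

# Illustrative click identifiers; extend this set for the platforms you use.
CLICK_IDS = {"gclid", "fbclid", "msclkid"}


def missing_tracking_params(clicked_url: str, landed_url: str) -> list:
    """Return tracking parameters present in the ad URL but absent after landing."""
    before = parse_qs(urlsplit(clicked_url).query, keep_blank_values=True)
    after = parse_qs(urlsplit(landed_url).query, keep_blank_values=True)
    tracked = {k for k in before if k in CLICK_IDS or k.startswith("utm_")}
    return sorted(tracked - set(after))


# Placeholder URLs for illustration.
stripped = missing_tracking_params(
    "https://example.com/chairs?utm_source=google&utm_medium=cpc&gclid=abc123",
    "https://example.com/chairs?utm_source=google&utm_medium=cpc",
)
```

An empty list means every tracking parameter survived the journey. Anything else tells you exactly which identifiers were lost.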

Verify your consent implementation. Check whether your current cookie banner allows Consent Mode signals. If you operate in the EU or UK and use Google Ads, confirm that Consent Mode v2 is active and properly configured. Without this, Google will cut off your access to remarketing audiences, and your conversion data will degrade further.

If you use third-party attribution tools like Northbeam or Triple Whale, review their “Lift” reports to see the delta between platform-reported ROAS and actual multi-touch attribution. Northbeam case studies have shown that accurate attribution can reveal a 44% lift in new customers that native platform reporting completely missed.

Step 2: Implement Core Infrastructure

Deploy Consent Mode v2 immediately. Work with your developer, agency, or website platform support to implement Advanced Consent Mode. Ensure that “cookieless pings” are firing when users deny consent. This is the baseline for data recovery and should be treated as non-negotiable infrastructure.

Enable Enhanced Conversions in Google Ads and the Conversions API (CAPI) in Meta. This is the quickest “patch” for attribution problems and can be implemented in a matter of hours to days, depending on your technical setup. The impact on data quality is immediate and substantial.
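Both features work by sending customer identifiers, such as an email address, in hashed form so the platform can match a purchase to a logged-in user. A minimal sketch of that preparation step follows, assuming SHA-256 over a trimmed, lowercased email. The function name is hypothetical, and each platform documents its own normalisation rules, so check those before relying on this.

```python
import hashlib


def hash_email_for_matching(email: str) -> str:
    """Normalise an email (trim, lowercase) and return its SHA-256 hex digest."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


# The same customer typed two different ways produces the same hash.
hash_a = hash_email_for_matching("  Jane.Doe@Example.com ")
hash_b = hash_email_for_matching("jane.doe@example.com")
```

Normalising before hashing matters because even one stray space or capital letter produces a completely different hash, which the platform cannot match.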

Evaluate Server-Side Google Tag Manager (sGTM) for your business. If your monthly ad spend exceeds $5,000, begin planning the migration to server-side tracking. This investment typically pays for itself within 3-6 months by recovering 10-20% of lost conversions and improving signal quality, which lowers your CPA. For businesses spending below $5,000 monthly, prioritise Consent Mode v2 and Enhanced Conversions first, then revisit sGTM as you scale.

Step 3: Build Strategic Assets

Implement first-party data collection loops. The simplest high-value addition is a post-purchase survey that asks “How did you hear about us?” with multiple choice options covering all your marketing channels plus options like “Friend/Family,” “Social Media (organic),” and “Other.” This zero-party attribution data often reveals the impact of “dark social” channels (links shared in WhatsApp, Slack, or private messages) that pixels cannot see at all.
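Once responses start coming in, a simple tally is enough to see which channels customers credit. The sketch below is one way to do it. The function name and the sample answers are made up for illustration.

```python
from collections import Counter


def channel_shares(responses: list) -> dict:
    """Return each survey answer's share of all non-empty responses."""
    counts = Counter(r.strip() for r in responses if r.strip())
    total = sum(counts.values())
    return {channel: n / total for channel, n in counts.most_common()}


# Invented survey answers.
shares = channel_shares(
    ["Google Search", "Friend/Family", "Instagram Ad", "Friend/Family"]
)
```

Comparing these shares with what your ad platforms claim is a quick way to spot channels that pixels are under-crediting.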

Create a MER dashboard that tracks Total Revenue divided by Total Marketing Spend on a weekly basis. Make this the primary KPI for executive and marketing reviews. Relegate platform-reported ROAS to a secondary diagnostic metric. This shift in focus prevents reactive decisions based on incomplete attribution data.
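The calculation behind such a dashboard is small enough to sketch in Python. The record layout (date, revenue, marketing spend), the function name, and the sample figures are assumptions for illustration. In practice the rows would come from your store and ad platform exports.

```python
from collections import defaultdict
from datetime import date


def weekly_mer(records) -> dict:
    """Group (day, revenue, spend) rows by ISO week and return revenue / spend."""
    totals = defaultdict(lambda: [0.0, 0.0])
    for day, revenue, spend in records:
        iso = day.isocalendar()
        week = (iso[0], iso[1])  # (ISO year, ISO week number)
        totals[week][0] += revenue
        totals[week][1] += spend
    # MER is undefined for a week with no spend, so report None for it.
    return {
        week: (rev / spend if spend else None)
        for week, (rev, spend) in sorted(totals.items())
    }


# Invented daily totals across all channels.
mer_by_week = weekly_mer([
    (date(2024, 1, 1), 4000.0, 1000.0),
    (date(2024, 1, 3), 2000.0, 500.0),
    (date(2024, 1, 8), 3000.0, 1500.0),
])
```

A MER of 4.0 means every unit of marketing spend was accompanied by four units of revenue that week, regardless of which platform claims the credit.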

Develop zero-party data collection mechanisms. Introduce product quizzes, preference centres, or interactive tools that collect explicit customer data. A furniture company might ask “Are you furnishing a bedroom, living room, or office?” A supplement brand might ask “What are your primary health goals?” This explicit data reduces reliance on algorithmic inference and creates owned audience segments you can use for targeting.

Use the stability of MER to test channels that are traditionally hard to track. Podcast advertising, influencer partnerships, and content collaborations often lack clean “click” pixels. In the old world, this made them terrifying to test. With MER as your guide, you can increase spending in these channels and watch whether your overall efficiency improves, without needing perfect attribution.

Moving Forward with Confidence

The signal loss crisis is not a temporary disruption. It’s the new permanent reality of digital marketing. Chrome’s “User Choice” era and Safari’s aggressive protection measures have fundamentally broken the old tools of measurement. These changes aren’t being rolled back.

However, this isn’t a dead end for your business. It’s a filter.

Businesses that cling to the old model of unrestricted tracking will find their costs rising and their visibility vanishing. They’ll continue flying blind, making decisions based on incomplete data and modelled reports they don’t trust. They’ll second-guess every campaign, pull back on marketing spend out of fear, and gradually lose their competitive position.

Conversely, businesses that embrace the architectural shift to privacy-first infrastructure will build a competitive advantage. By implementing Consent Mode v2, migrating to Server-Side Tracking, deploying Enhanced Conversions, and building first-party data assets, you’ll measure what your competitors miss. You’ll bid with confidence when others pull back. You’ll own the relationship with your customers instead of renting access through surveillance-based platforms.

The missing pieces aren’t buried in legal documents or dry technical manuals. They’re the strategic understanding that data is now a probability, not a certainty. Perfect attribution is gone. What remains is the ability to build resilient measurement frameworks that give you directional confidence even when individual-level tracking fails.

By following this roadmap, you move from the chaos of signal loss to the clarity of resilient growth. You’re no longer flying blind. You’re navigating by instruments you trust, making decisions based on aggregate truth rather than fragmented pixels. Your marketing becomes sustainable, your growth becomes predictable, and you build a foundation that withstands whatever privacy changes come next.